Needs-Based Evidence Mapping Report

For a more informed use of digital education tools and services

The European EdTech Alliance (EEA) has launched its latest report, introducing Needs-Based Evidence Mapping, a new approach designed to support more evidence-informed creation, selection and adoption of digital education tools and services across Europe.

Rethinking the evidence debate in EdTech

The report begins from a critical observation:

The current challenges surrounding EdTech evidence are not primarily caused by a lack of evidence, nor by insufficient methodological rigour. Instead, challenges arise because:

Evidence is produced for different purposes

It is interpreted through different professional logics

It is mobilised by actors operating under distinct constraints and accountability structures

As evidence circulates across the ecosystem — from developers to educators, from policymakers to investors — its meaning frequently shifts. Sometimes subtly, sometimes dramatically. The result? Misunderstandings, misplaced expectations, and ultimately, erosion of trust.

A translation device for the ecosystem

Needs-Based Evidence Mapping responds to this challenge by reframing how evidence is understood, organised and interpreted. It is not a new evaluation tool, a certification scheme or a compliance framework. Nor does it rank evidence hierarchically as “stronger” or “weaker”.

Rather, it functions as a translation device, helping actors understand what kind of evidence is needed, by whom, and for what purpose. Instead of asking “Does this EdTech product have evidence?”, the framework encourages more precise and productive questions:

Which needs does this evidence address?

Which questions does it help answer — and which does it not?

What assumptions are embedded in how it was generated?

How might its meaning change as it moves across contexts, actors and purposes?

These questions do not require consensus on methods or metrics, but they do require a shared interpretive language.

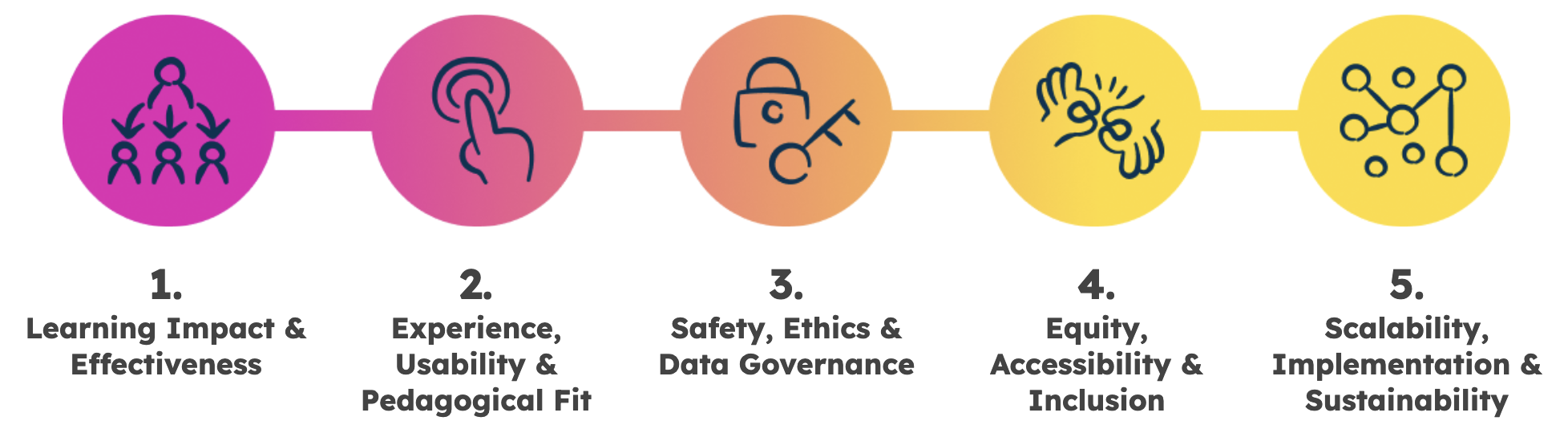

A five-domain typology organised by purpose

At the core of the report is a five-domain typology that organises evidence according to its purpose, not its methodological strength.

Each domain reflects recurring concerns across education systems and markets. Together, they provide a shared interpretive space where teachers, developers, founders, policymakers and investors can situate their own needs, without demanding that others adopt identical evaluative criteria.

By locating evidence within one of the five domains:

The same underlying data can be understood in context

Actors can distinguish between evidence answering different questions

Gaps in evidence portfolios become visible

For example, a product may show strong usability evidence and demonstrate technical scalability, yet lack insight into inclusion or offer limited articulation of learning mechanisms. Anchoring claims within a clear domain makes it easier to recognise when the same data carry different meanings across contexts.

From theory to practice

The report does not remain conceptual. It includes two downloadable templates designed for immediate use by ecosystem actors, along with use cases for different stakeholder groups:

Educators and school leaders can better understand what evidence says about classroom value and contextual fit.

EdTech companies can structure evidence portfolios transparently, avoiding overclaiming or linear “maturity” narratives.

Policymakers and funders can interpret claims more realistically across diverse decision contexts.

Intermediaries and ecosystem actors can mediate expectations and preserve meaning as evidence circulates.

By aligning evidence types and the data underlying them with the needs they are intended to serve, the framework helps distinguish between different forms of value without collapsing them into a single scale of quality or impact.

In doing so, the EEA aims to contribute to a more mature, transparent and trust-based EdTech ecosystem, one where evidence is not merely accumulated, but meaningfully interpreted.