Why evidence in EdTech is more complicated than it looks

When people talk about “evidence in EdTech”, the conversation often sounds simple on the surface. Does a tool work? Can it improve learning outcomes? Is there proof of impact?

But once you step into the reality of how educational technology is developed, evaluated, adopted, and governed, the idea of evidence becomes much more complex. Evidence is not a single metric or study. It is an ecosystem of data, interpretations, and expectations that travel between educators, companies, policymakers, parents, learners, researchers, and investors.

Needs-based evidence mapping was developed to make sense of this complexity. It provides a way of understanding how different actors use evidence, why they sometimes talk past each other, and how better alignment might be achieved. Three key theoretical ideas help explain this landscape:

the dual logic of evidence

the periodic table of evaluation

the processes of translation and mutation that occur as evidence moves through the system

Together, they reveal why debates about evidence in EdTech often feel confusing, and how we might approach them more productively.

The dual logic of evidence

A central insight of needs-based evidence mapping is that evidence in EdTech operates under two different logics.

The first is the education logic of evidence. This perspective is rooted in educational research, learning sciences, and professional practice. Its primary concern is understanding learning processes. Evidence is valued when it helps explain why something works, for whom, and under what conditions. It emphasises pedagogical coherence, context, ethics, and professional judgement.

For educators and researchers, evidence often takes the form of classroom studies, case studies, qualitative insights from teachers and learners, or theory-driven evaluations. The aim is not just to demonstrate that an intervention works, but to understand the mechanisms behind learning and how they interact with real educational environments.

The second is the market logic of evidence. Here, the focus shifts to decision-making under uncertainty. Evidence becomes credible when it enables actors such as investors, procurement teams, or school systems to decide whether a product is viable, scalable, and safe to adopt.

In this logic, evidence often takes the form of product performance metrics, adoption data, user retention statistics, compliance audits, or interoperability certifications. These artefacts act as signals of reliability and maturity. The market logic prioritises comparability, scalability, and risk reduction rather than deep pedagogical explanation.

Neither logic is inherently right or wrong. Each reflects the needs of different actors operating under different constraints. However, problems arise when evidence produced for one logic is interpreted within the other. A usability pilot might be read as proof of learning impact, or a compliance certificate might be mistaken for ethical assurance. Misalignment emerges not because actors disagree about evidence, but because they are asking different questions of it.

The periodic table of evaluation

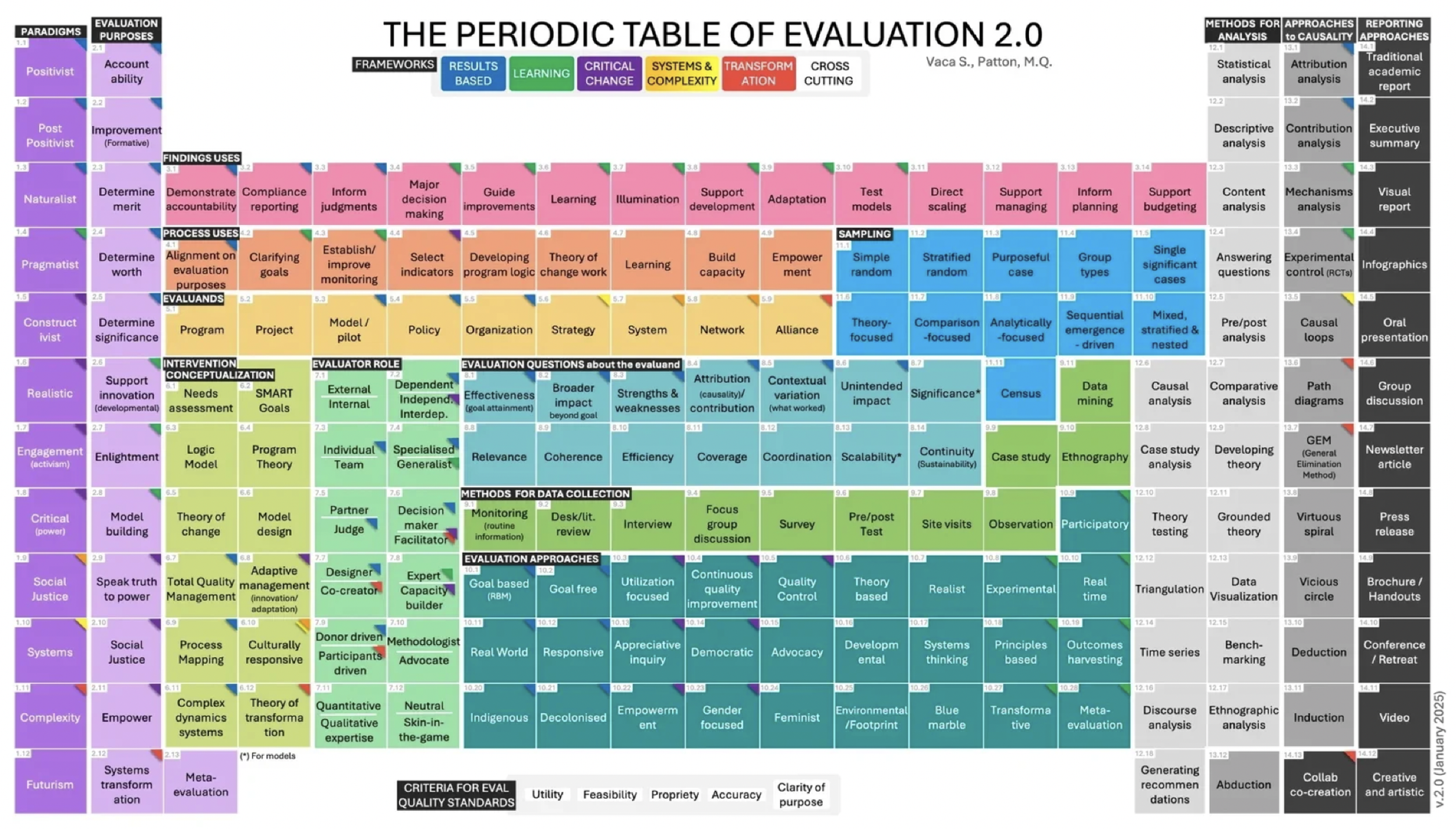

To make sense of the many ways evidence is generated and interpreted, needs-based evidence mapping draws on a conceptual tool known as the Periodic Table of Evaluation.

The idea is similar to the periodic table in chemistry. Instead of elements, it maps the different traditions, methods, and approaches used in evaluation.

The table highlights that evidence is not simply the result of a study. It is produced through an entire architecture of evaluation practice, including:

different evaluation philosophies (experimental, theory-based, participatory, developmental)

different methods of data collection (surveys, analytics, classroom observations)

different analytical approaches (statistical modelling, case study analysis, ethnography)

different approaches to causality

different reporting formats

Together, these elements form the broader ecosystem through which evidence is generated and communicated.

The table also reveals where tensions occur. One of the most prominent divides concerns causality. In the market logic, causality is often framed in terms of attribution: can we show that a specific product caused a measurable improvement? In educational research, the question is often broader: through what mechanisms and in what contexts might a tool support learning?

By making these traditions visible, the periodic table helps shift the conversation away from the simplistic question of “strong versus weak evidence”. Instead, it encourages us to ask a more useful question: what kind of evidence is needed, for whom, and for what purpose?

Translation and mutation: what happens when evidence travels

Even when evidence is carefully generated, it rarely stays in its original form. As it moves between stakeholders such as teachers, companies, policymakers, and investors, evidence undergoes processes of translation. Each actor interprets and repurposes the information to fit their own decision-making environment.

Sometimes this translation becomes more dramatic. The original meaning of evidence can change so much that it effectively becomes a mutation. A usability insight from a classroom pilot may evolve into a marketing claim about “impact”. A compliance report demonstrating legal conformity may be interpreted as evidence of broader ethical responsibility. An internal analytics dashboard might eventually appear in a public statement about learning outcomes.

These transformations are not necessarily malicious. They often occur because actors operate in different contexts with different incentives and languages.

However, they can lead to recurring frustrations across the ecosystem. Educators may distrust vendor claims, companies may struggle to understand policy expectations, and policymakers may find company-generated evidence insufficiently robust.

What appears as disagreement about evidence is often the result of misaligned translation processes rather than fundamentally opposing goals.

Toward a more coherent evidence ecosystem

Needs-based evidence mapping therefore proposes a shift in how we think about evidence in EdTech. Instead of searching for a single gold standard of proof, the approach recognises that evidence serves multiple purposes across the ecosystem. It distinguishes different domains of evidence, including: learning impact; usability and pedagogical fit; safety and data governance; equity and accessibility; and scalability and sustainability.

By situating evidence within these domains and recognising the dual logic that shapes its interpretation, stakeholders can better understand why evidence is produced, how it travels, and how it should be interpreted. Ultimately, the goal is not simply to generate more evidence, but to create evidence that is meaningful, interpretable, and proportionate to the needs of those who rely on it.
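One way to picture how situating evidence within domains can expose a mutation is a small Python sketch. It is an illustration only, not part of needs-based evidence mapping itself: the class and function names (`Domain`, `Evidence`, `Claim`, `is_mutation`) are hypothetical, the example claims are invented, and the rule that a claim "mutates" whenever its domain differs from the domain of its source evidence is a deliberate simplification. Assigning the compliance audit to the safety and data governance domain is likewise an assumption made for the example.

```python
from dataclasses import dataclass
from enum import Enum


class Domain(Enum):
    # The five evidence domains named in the text.
    LEARNING_IMPACT = "learning impact"
    USABILITY_FIT = "usability and pedagogical fit"
    SAFETY_GOVERNANCE = "safety and data governance"
    EQUITY_ACCESS = "equity and accessibility"
    SCALABILITY = "scalability and sustainability"


@dataclass(frozen=True)
class Evidence:
    name: str
    domain: Domain  # the domain this evidence actually speaks to


@dataclass(frozen=True)
class Claim:
    text: str
    domain: Domain  # the domain the claim is about
    source: Evidence


def is_mutation(claim: Claim) -> bool:
    """Simplified rule (an assumption): a claim has mutated when it
    speaks about a different domain than its source evidence does."""
    return claim.domain is not claim.source.domain


# Hypothetical evidence artefacts, loosely based on the examples in the text.
pilot = Evidence("classroom usability pilot", Domain.USABILITY_FIT)
audit = Evidence("compliance audit", Domain.SAFETY_GOVERNANCE)

# Hypothetical claims that cite those artefacts.
claims = [
    Claim("Teachers found the tool easy to use", Domain.USABILITY_FIT, pilot),
    Claim("Proven impact on learning", Domain.LEARNING_IMPACT, pilot),
    Claim("Meets data protection requirements", Domain.SAFETY_GOVERNANCE, audit),
]

flagged = [c.text for c in claims if is_mutation(c)]
print(flagged)  # ['Proven impact on learning']
```

Only the claim that stretches a usability pilot into a statement about learning impact is flagged. A real mapping exercise would rely on judgement rather than a strict equality check, but the sketch shows why recording the originating domain alongside each piece of evidence makes such shifts easier to spot.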

In a field as complex and socially embedded as education, evidence will always involve interpretation, negotiation, and translation. But by making these processes visible, needs-based evidence mapping offers a clearer framework for building trust, aligning expectations, and supporting more responsible innovation in education.

The European EdTech Alliance has published a report on the topic.